Web application with a single entry point

Published on 2015-01-01.

I stumbled upon an article called "Writing modern day PHP applications" in which the author states that he sometimes comes across third-party code that he believes exhibits features that were "customary in the 90's". One such feature is an application not having a single point of entry.

The author writes:

Back in the days where the rewrite module was an uncommon luxury creating multiple .php files in the webroot was the standard.

Well, that's because it is the very purpose of the architecture. The web server IS the single entry point; you're not supposed to put yet another layer of complex redirection on top of that. You're supposed to put multiple files in the webroot.

I cannot say it any better than Rasmus Lerdorf (the inventor of PHP) has said it:

Just make sure you avoid the temptation of creating a single monolithic controller. A web application by its very nature is a series of small discrete requests. If you send all of your requests through a single controller on a single machine you have just defeated this very important architecture. Discreteness gives you scalability and modularity. You can break large problems up into a series of very small and modular solutions and you can deploy these across as many servers as you like.

Even if you're working on a web application that's never going to need to scale up to multiple servers, you're only adding layers of complexity where the application can break (which is something we see every day), and simulating yet another single entry point makes the application noticeably slower. That is one reason why most frameworks are notoriously inefficient and horrible. The more you abstract away from the core, the less efficient it becomes.

Some people state that it is all about being DRY (Don't Repeat Yourself): that if you have multiple PHP files (or files in whatever programming language you use for web development) handling requests, you will have duplicated code.

Having multiple files handling requests has nothing to do with code duplication. It all depends on how the application is designed and how the separate structures are bundled together.
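To make that concrete, here is a minimal sketch of two entry scripts sharing one piece of code. The file names (`lib/page.php`, `contact.php`, `about.php`) and the functions `page_title()` and `render_page()` are made up for illustration; they don't come from either of the quoted articles. So that it runs as a single file, the two entry scripts are simulated as two function calls, and the comments show what each real file would contain.

```php
<?php
declare(strict_types=1);

// lib/page.php (hypothetical shared file): code every entry script reuses.
function page_title(string $section): string
{
    return 'My Site - ' . ucfirst($section);
}

function render_page(string $section, string $body): string
{
    // Escape the title; $body is assumed to be trusted markup in this sketch.
    $title = htmlspecialchars(page_title($section), ENT_QUOTES, 'UTF-8');
    return "<html><head><title>{$title}</title></head><body>{$body}</body></html>";
}

// contact.php (hypothetical entry script in the webroot) would contain only:
//   require __DIR__ . '/../lib/page.php';
//   echo render_page('contact', '<p>Write to us.</p>');
//
// about.php (a second entry script) would contain only:
//   require __DIR__ . '/../lib/page.php';
//   echo render_page('about', '<p>Who we are.</p>');

// Simulate the two entry scripts in this one file:
$contact = render_page('contact', '<p>Write to us.</p>');
$about = render_page('about', '<p>Who we are.</p>');
```

Each entry script stays a couple of lines long, and nothing is duplicated: the shared logic lives in one place and is pulled in with `require`.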

Other people talk about encouraging separation of "business logic" and "layout logic", unified access to the objects for database queries, service calls, etc. But that has absolutely nothing to do with a single entry point in the web application whatsoever. Separation of logic can be handled in numerous ways without using a single entry point.
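As one possible illustration, the sketch below keeps business logic and layout logic in separate functions inside an ordinary entry script, with no dispatcher involved. Everything here is hypothetical: the file name `orders.php`, the functions `find_open_orders()` and `render_order_list()`, and the hard-coded sample data, which stands in for a real database query or service call.

```php
<?php
declare(strict_types=1);

// Business logic: no HTML here, only filtering of plain data.
function find_open_orders(array $orders): array
{
    return array_values(array_filter(
        $orders,
        static fn(array $order): bool => $order['status'] === 'open'
    ));
}

// Layout logic: knows only how to turn rows into markup.
function render_order_list(array $orders): string
{
    $items = '';
    foreach ($orders as $order) {
        $items .= '<li>' . htmlspecialchars($order['item'], ENT_QUOTES, 'UTF-8') . '</li>';
    }
    return "<ul>{$items}</ul>";
}

// orders.php (hypothetical entry script) just wires the two together.
// The sample data below replaces what would normally come from storage.
$orders = [
    ['item' => 'Keyboard', 'status' => 'open'],
    ['item' => 'Monitor', 'status' => 'shipped'],
    ['item' => 'Mouse & pad', 'status' => 'open'],
];
$html = render_order_list(find_open_orders($orders));
```

In a real application the two functions would likely live in separate included files, but the separation itself does not depend on how the request reached the script.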

The author of another article, called "Why does PHP suck?", states:

PHP's "drop 'n run" concept also has a lot of shortcomings. Sure, it's nice to just drop a script in a folder on the web server and have it run. That is, until you realize that now you have an infinite number of entry points into your application, even though you just need one. If you take a look at the big boys like WordPress, you'll see that all requests are being redirected to a single entry point and then dispatched further - which is how it should be.

Looking at how the so-called "big boys like WordPress" are doing things is really bad advice! What the "big boys" are doing, or what "popularity" or the "majority" calls for, has NEVER been a guideline for correctness.

Rewriting requests through another layer of complexity not only defeats the purpose of the architecture, it also seriously affects the efficiency of the application, even on modern hardware.

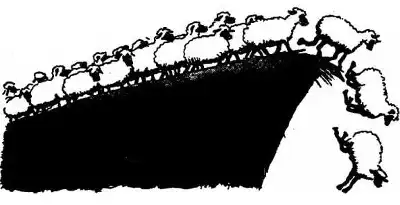

Religiously following the "dos and don'ts" of the "web sheep pack" will without a doubt send you on a wild goose chase, trying to perfect something that has become inherently imperfect - or just plain wrong.